What is Conflux?

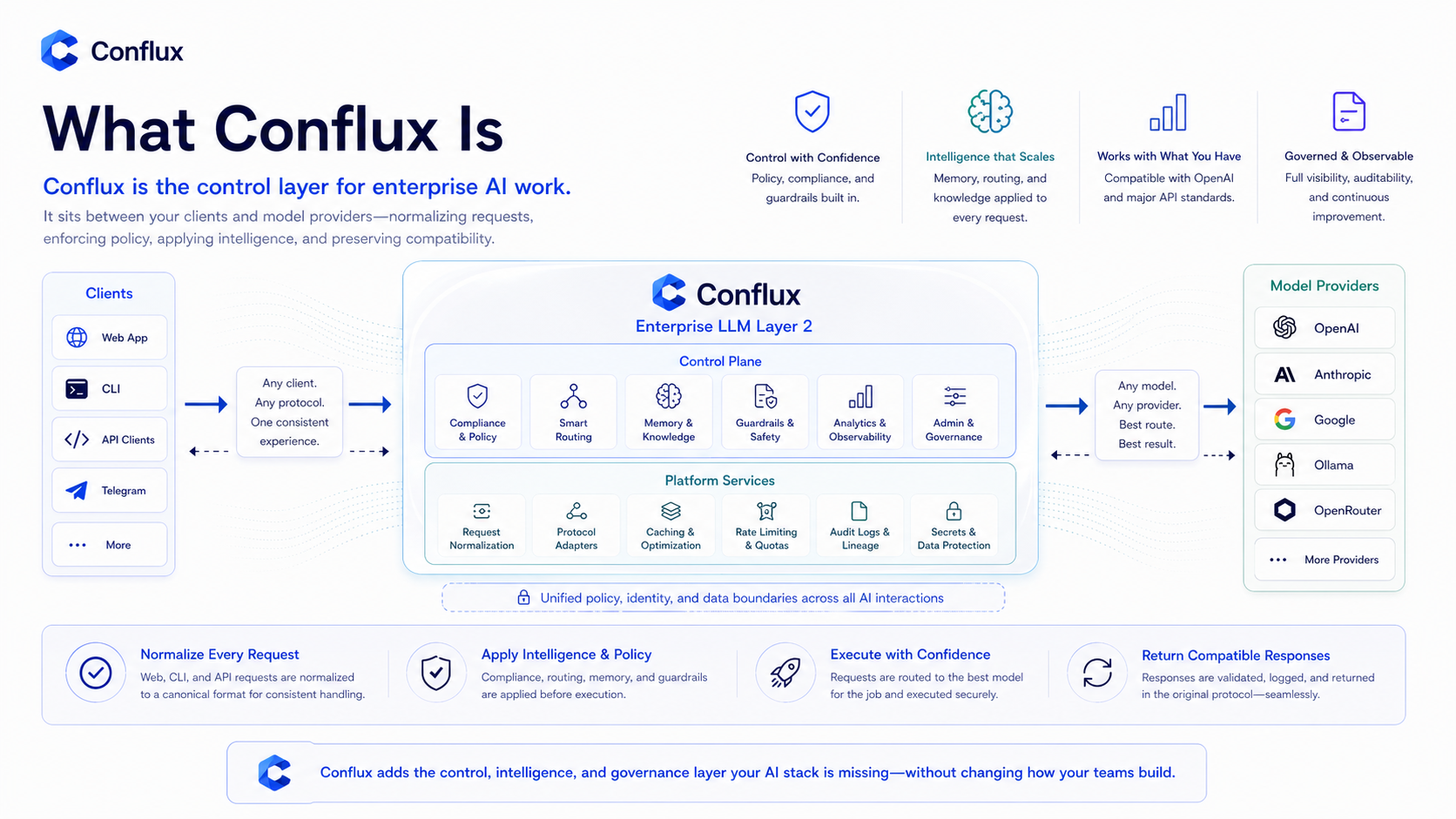

Conflux is an enterprise LLM Layer 2 for governed AI workspaces.

If you are new to Conflux, read Quickstart, Execution modes, Memory overview, and API overview next. Those pages show how Conflux works from a user's point of view before the documentation goes deeper into governance.

Why it exists

Teams now use AI through web chat, APIs, Claude CLI, Codex CLI, and custom tools. Without a shared control layer, every request becomes isolated: no common policy, no workspace memory, no route visibility, and no durable understanding of what the team has already built or decided.

Conflux sits between users, clients, and models. It gives every workspace one governed runtime for policy, memory, routing, model execution, and analytics.

Platform layers

Who uses it

Conflux is for teams that want AI to behave like a governed workspace tool, not a collection of disconnected chat sessions. It is useful for engineering, product, legal, accounting, operations, support, and any team that needs shared context, traceability, and model control.